70% of Americans want to slow down AI development.

PauseAI US makes it happen.

Our local groups connect directly with lawmakers and organize highly visible local action. Help us fund another year of growth, hundreds of meetings with Congress, and thousands of constituent actions.

Bringing AI safety to US Lawmakers

Most congressional offices have not heard about the risk of human extinction from artificial intelligence. Many are not even aware of how unpopular AI is with voters. PauseAI US brings this message directly to lawmakers, from their own constituents.

In 2024–2025, we built the PauseAI US brand, developed our organizing infrastructure, and grew our local groups. In 2026, we are leveraging this volunteer base into constituent meetings with lawmakers.

Our volunteer-led local model lets us drive impact beyond our staff budget. To date, we have held 192 meetings with members of Congress, built 47 local groups across 29 states, and made bipartisan media appearances, including in the New York Times and Fortune and on Bannon’s War Room.

We are raising $450,000 to sustain our operations for one year, hire an additional Organizing Director, and fund hundreds of constituent-led congressional meetings and high-visibility local actions.

Media awareness and constituent-led local organizing can drive legislative action

Raise awareness that pausing is popular and possible

Most Americans have not heard that frontier AI can be paused. In mainstream media, at protests, and in everyday conversations, we bring Pause into the Overton Window. We lead with a big ask: Pause AI. If we pause, we win. If we fall short and get strong regulation, that’s still a better world.

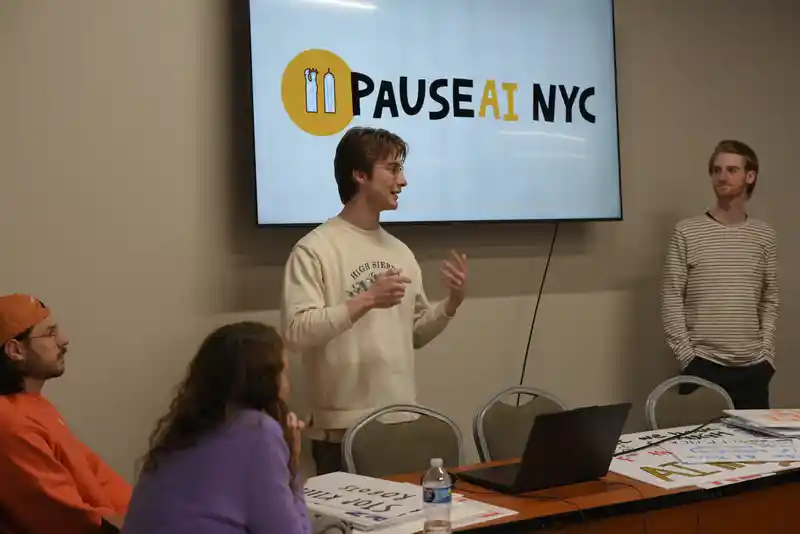

Build volunteer-led local groups

PauseAI US recruits and trains local leaders to organize public outreach in their cities. We equip them to run events, collect petition signatures, and set up constituent meetings in their districts. We have founded 47 local groups across 29 states.

Connect constituents directly with their representatives

We train and equip volunteers in grassroots lobbying so they can show up in person and demand a pause. We believe that constituents speaking plainly and directly to their representatives about the risk of human extinction from AI will be critical to enacting legislation. We also run the digital infrastructure that makes it easy for any supporter to call or email lawmakers. To date, we have held 192 meetings with lawmakers and driven over 4,000 calls and emails.

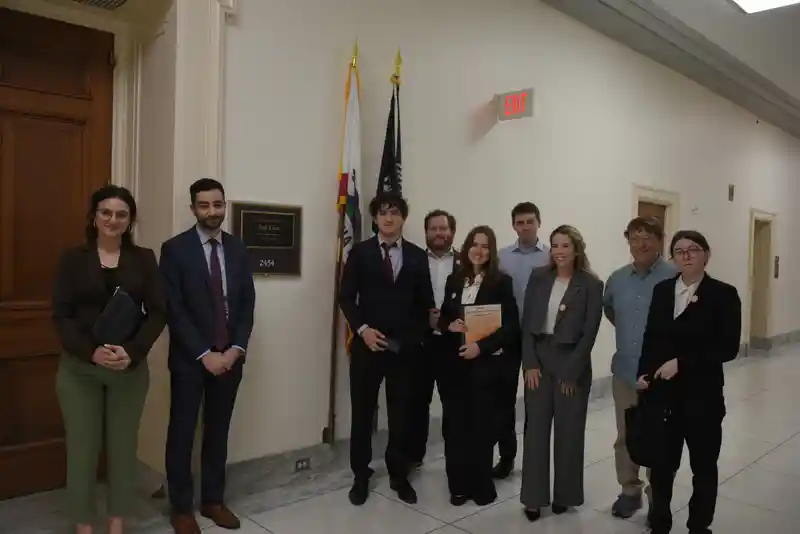

Snapshot of PauseCon DC 2026

In April 2026, PauseAI US volunteers from 18 states traveled to DC and held 74 meetings with congressional offices. This event is an example of how grassroots lobbying allows PauseAI US to punch above our weight and budget.

Following PauseCon, PauseAI US will support attendees in scheduling meetings in their home districts, and then host an even larger PauseCon DC later in 2026.

Direct lobbying

Beyond PauseCon, PauseAI US has hosted 41 volunteer-led meetings and 75 direct lobbying meetings. Most were introductory, serving to acquaint legislators with the risks of powerful AI. We have also initiated a bipartisan, bicameral letter to Secretary Rubio calling for the US to lead negotiations on an AI treaty to prevent superintelligence. Fifteen congressional offices have expressed strong interest in signing, pending identification of a lead sponsor.

Scaling digital advocacy

We have driven over 4,000 calls and emails to lawmakers to date, with ~2,000 in the last quarter alone. These contacts support an International Treaty to Pause Frontier AI, as well as strong AI safety bills such as the bipartisan AI Risk Evaluation Act. Our reach is also expanding: our email subscriber count grew 77% in the last quarter.

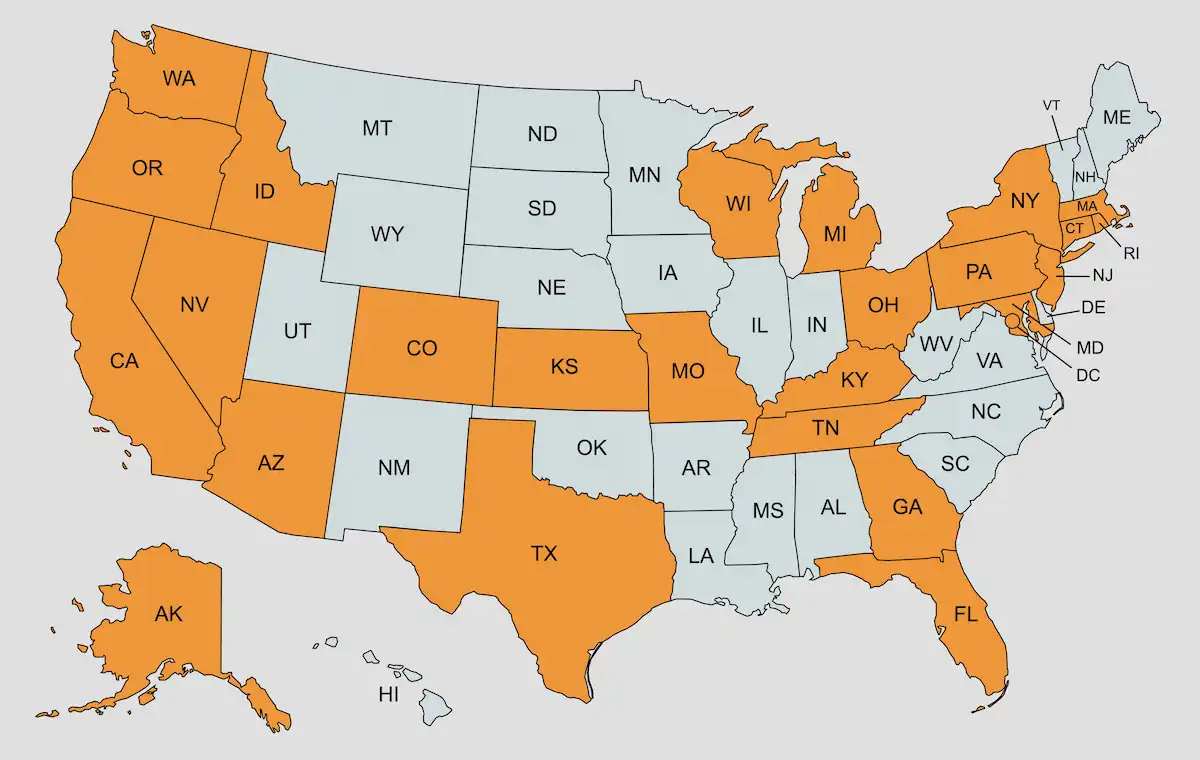

47 groups across 29 states

PauseAI US recruits, trains, and supports leaders to run local groups. We have built the largest community of AI safety activists in the country.

Local groups are how we get outsize leverage on every dollar donated. Contributions support local groups in their constituent meetings, calls to lawmakers, petition drives, protests, and educational outreach.

PauseAI US SF

Held the first-ever AI safety protest outside OpenAI in 2023 — before most Americans had heard of frontier AI risk — and has continued to lead high-profile demonstrations outside AI companies.

A single-issue voice that reaches both sides of the aisle

PauseAI US is a single-issue group, bringing a message of AI safety to outlets across the political aisle. We have been featured across a wide variety of publications.

In addition to widespread media coverage, PauseAI US Executive Director Holly Elmore has represented PauseAI US on panels alongside Yoshua Bengio, Max Tegmark, and California Assemblymember Rebecca Bauer-Kahan.

Leveraging momentum into 200+ meetings, 25K contacts, high-reach media

We are raising $450,000

To sustain existing operations for another year and hire a second Organizing Director, extending our reach across more local groups and more media channels.

Donate Now

Sources for “70% of Americans want to slow down AI development”

The 70% figure reflects a conservative synthesis of public polling over the last several years. A selection of supporting evidence:

80% of U.S. adults believe the government should maintain rules for AI safety and data security, even if it means developing AI capabilities more slowly. This view is held by 88% of Democrats and 79% of Republicans and Independents.

69% agree that superintelligence should be prohibited until there is broad scientific consensus it can be developed safely and controllably — versus just 9% who disagree. 80% of voters support keeping humans in charge of AI, with strong oversight, clear limits, and corporate accountability — versus just 10% who favor fast, lightly regulated development. 77% believe AI must stay under human control, with people deciding what to delegate and retaining the ability to stop systems when needed — versus just 11% who prefer giving AI more independence for speed and scale.

Almost two-thirds (64%) feel that superhuman AI should not be developed until it is proven safe and controllable, or should never be developed.

72% of voters prefer slowing down the development of AI compared to just 8% who prefer speeding development up.

64% of registered voters want the government to regulate artificial intelligence.

72% of Americans want more regulation of the AI industry, a 15-point increase since a similar poll a year earlier. This includes 83% of Harris supporters and 66% of Trump supporters.

75% of Democrats and 75% of Republicans believe that “taking a careful, controlled approach” to AI by preventing the release of tools that terrorists and foreign adversaries could use against the U.S. is preferable to “moving forward on AI as fast as possible to be the first country to get extremely powerful AI.”

71% support public campaigns to slow AI development. 71% support government regulation that slows AI development. 63% support banning the development of artificial general intelligence that is smarter than humans. 64% support a global ban on data centers that are large enough to train AI systems that are smarter than humans.