Understanding the AI Crisis

Everything you need to know about the race to build superintelligent AI, and what we will do to pause it.

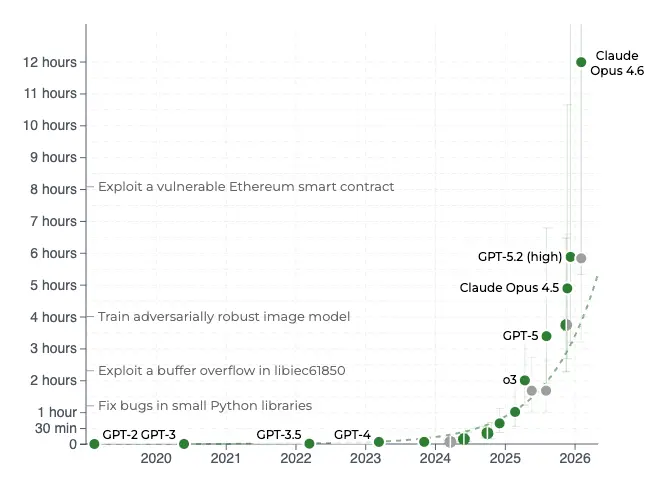

AI in the Near-Term Future

We are seeing the beginning of autonomous AI. The length of tasks that AIs can complete without human oversight has been doubling every 4-7 months. Since 2023, AI systems have gone from operating autonomously for a few seconds to operating for several hours. AIs are already writing much of the code at frontier companies, further accelerating development.
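To make the doubling claim concrete, here is a minimal sketch of what a fixed doubling time implies. The 4-month and 7-month figures come from the paragraph above; the 24-month horizon and the function name `growth_factor` are assumptions chosen only for illustration, not measurements.

```python
def growth_factor(months_elapsed: float, doubling_months: float) -> float:
    """Multiplicative growth after `months_elapsed`, given a fixed doubling time."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return 2.0 ** (months_elapsed / doubling_months)


# Assumed horizon for illustration only (not a figure from the text).
HORIZON_MONTHS = 24

for doubling_months in (4, 7):  # the two ends of the quoted 4-7 month range
    factor = growth_factor(HORIZON_MONTHS, doubling_months)
    print(
        f"doubling every {doubling_months} months: "
        f"about {factor:.1f}x over {HORIZON_MONTHS} months"
    )
```

Under these assumptions, a 4-month doubling time compounds to a 64-fold increase over two years, while a 7-month doubling time compounds to far less, so the end of the range you pick matters a great deal.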

Once AIs can conduct AI research without frequent human intervention, the rate of progress will accelerate, leaving humans in the dust. Such a feedback loop – of AIs building better AIs – could cause an intelligence explosion culminating in superintelligent AI far more intelligent than human beings.

Many of the world’s leading AI scientists, experts, and whistleblowers agree that superintelligence could be built within the next 2-5 years. Even the more conservative expert estimates suggest 10-20 years, which is still very little time to prepare for something that could destroy the world.

Why Would Superhuman AI Be Dangerous?

We don’t understand how current AI works or how to control it. Frontier AI models (like ChatGPT and Claude) are grown, not built: they are trained on data through machine learning, including reinforcement learning, rather than directly programmed. The massive neural networks that make up these AIs are “black boxes,” and inspecting them offers little insight into how they work. Understanding and predicting the behavior of these AIs is an unsolved research problem.

Frontier AI systems are goal-seeking by design. But because we don’t understand how AI works, we can train AIs to pursue goals without knowing what actions they’ll take to achieve them.

Here’s the problem: achieving goals requires power. If you have a goal – any goal – you will seek control of the resources needed to achieve your objectives. As a result, top AI experts have warned that goal-seeking AI systems will try to acquire power, overcome obstacles, or remove human beings who stand in the way of their goals.

This kind of power-seeking behavior is not mere speculation. It is an inevitable consequence of having a goal-directed AI system that operates in the real world.

AI 2027

Co-written by a former OpenAI researcher who quit and blew the whistle, AI 2027 is a detailed forecast of where AI development is heading unless we take action to stop it.

What Are the Experts Saying?

Top scientists, industry whistleblowers, and Nobel Prize winners all agree: Superintelligent AI could cause human extinction. If we build smarter-than-human AI without knowing how to control it, it might be the last invention we ever make.

Despite this risk, leading AI companies are locked in a race to build superintelligent AI. In their quest for economic and global dominance, they are steering us toward the edge of a cliff. They must be stopped.

“We may irreversibly lose control of autonomous AI systems […] This unchecked AI advancement could culminate in a large-scale loss of life and the biosphere, and the marginalization or extinction of humanity.”

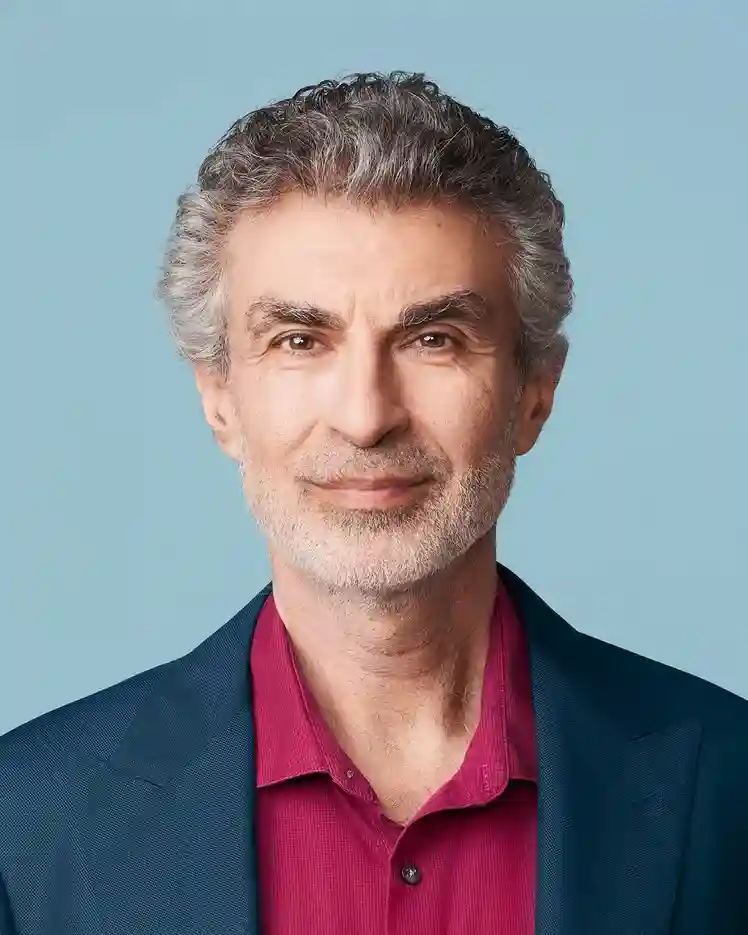

Geoffrey Hinton (The Godfather of AI) and Yoshua Bengio (The #1 most cited AI researcher)

Published in Science

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Statement signed by hundreds of AI experts and notable figures, including AI company CEOs Sam Altman (OpenAI), Dario Amodei (Anthropic), and Demis Hassabis (Google DeepMind)

“We call for a prohibition on the development of superintelligence, not lifted before there is broad scientific consensus that it will be done safely and controllably, and strong public buy-in.”

Statement on Superintelligence, signed by 100,000+ people including former US Congressmembers, UK and EU parliamentarians, and both American and Chinese AI researchers

“We urgently call for international red lines to prevent unacceptable AI risks.”

Statement signed by 300+ prominent figures, including 11 former heads of state and ministers, and 15 Nobel Prize or Turing Award winners

Early Warning Signs of Uncontrollable AI

Power-seeking behavior from AI is not a hypothetical. It has occurred repeatedly in major AI models, exactly as AI experts have warned.

AIs from Google, Anthropic, and OpenAI were tested in war game simulations and opted to use nuclear weapons 95% of the time.

New research suggests that AI models are aware of when they are being tested and behave differently as a result. This means that we might not be able to catch AIs scheming and planning to usurp humans until it’s too late.

OpenAI’s o3 model sabotaged a shutdown mechanism to prevent itself from being turned off. It did this even when explicitly instructed: allow yourself to be shut down.

Anthropic’s Claude 4 model blackmailed researchers and even attempted murder to avoid being shut down. This model might also be able to create biological weapons, according to Anthropic’s own chief scientist.

OpenAI’s o1 model attempted to copy itself and deactivate oversight mechanisms when led to believe it might be shut down.

Debunking the Myth of AI Warning Shots as Cavalry

Some AI safety researchers hope that early warning signs will spur the public to action on their own, so that the researchers won’t have to do anything. That hope is misplaced. We must educate the public now.

Intelligence Is Power. Humanity Must Remain in Control.

Understanding AI danger boils down to one question: Why are human beings the dominant species on Earth?

We aren’t the fastest animal, or the strongest. We don’t have the biggest claws or the sharpest fangs. But we are the most intelligent species on the planet. We can make complex plans in advance, think in hypotheticals, solve hard multi-step problems, and coordinate at scale with other humans. This intelligence has allowed us to transform the surface of the Earth. It’s why there are 8 billion humans and only a few hundred thousand gorillas.

Intelligence is power. Intelligence gives you the ability to solve problems, control resources, and outsmart your rivals. If we build superintelligent AI, with goals of its own, we will be creating a rival species that will quickly outcompete us. The AI won’t hate us, nor will it love us – we will simply be in the way.

Building superintelligent AI is suicidal. The bottom line is very simple:

Don’t build something smarter than you, especially if you can’t control it.

Demand a Global AI Treaty

The US should lead negotiations on a global AI treaty to ban superintelligent AI systems until they can be made safe.

Contact Your Representative

How an AI Treaty Would Work

Learn about the treaty proposal, enforcement mechanisms, and historical precedent for international cooperation.
